Imagine looking at a floating image of your grandmother, speaking to you in real time, as if she’s sitting right across the table. No glasses. No headset. Just a clear, lifelike 3D presence in your living room. This isn’t science fiction anymore; it’s happening in 2026. Holography and 3D photography have moved past lab demos and movie special effects. They’re now real, functional, and reshaping how we see, communicate, and interact with digital content.

How Holography Actually Works (No Jargon)

Most people think holograms are just fancy 3D projections. They’re not. Traditional 3D movies use two slightly different images to trick your brain into seeing depth. Holography does something deeper: it recreates the actual light waves that bounced off the original object. That means every angle, shadow, and reflection behaves exactly as it would in real life. You can walk around a hologram and see the backside of a heart, a car engine, or a human face, just like you would in the real world.

The magic lies in capturing interference patterns. In early holography, a laser beam was split in two: one beam hit the object, the other served as a reference. When they met, they created a complex pattern of light and dark lines on film. Later, digital sensors replaced film, and computers started reconstructing those patterns into 3D images. Today, it’s even smarter. Systems use AI to predict how light should behave, then simulate it in real time. No more perfect lab lighting. You can now create sharp holograms using ordinary room light.

PEAR-FINCH: The Game-Changer in Depth

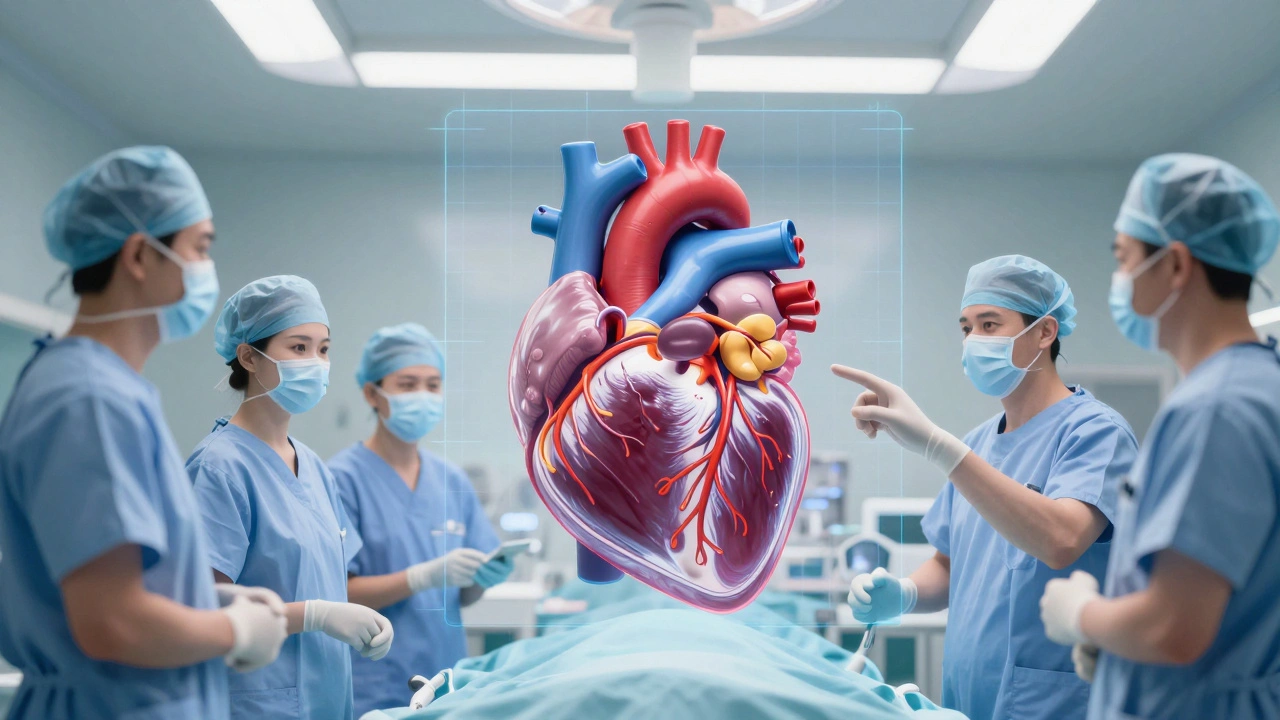

One of the biggest leaps in 2026 came from the University of Tartu in Estonia. Researchers led by Shivasubramanian Gopinath developed PEAR-FINCH, a computational holography method that allows post-recording adjustment of depth focus, improving clarity and usability in real-world conditions. Before this, once you captured a hologram, you were stuck with the focus level you set during recording. If the object was too far or too close, the image got blurry. PEAR-FINCH changes that. It works by taking multiple holograms at different focal distances during a single capture. Then, software combines them into one ultra-sharp image. Think of it like stacking photos taken at different focus points, but for 3D light.

The result? A fivefold increase in depth of focus. That’s huge for medical imaging, where a surgeon needs to see a tumor in perfect detail from every angle. Or for microscopy, where a single cell might span several micrometers in depth. Now, you don’t have to guess the right focus before you record. You fix it afterward.

Where Holography Is Already Making a Difference

This isn’t just theory. Holography is in use right now, and the results are changing industries.

- Medicine: Surgeons in Tokyo and Boston are using holographic models of patient organs before operations. A 3D heart, reconstructed from MRI scans, lets them plan incisions with millimeter precision. Remote consultations now include live holographic walkthroughs of internal anatomy.

- Education: High school biology classes in Oregon and Finland use holograms to explore human anatomy. Students can pull apart a holographic lung, rotate a neuron, or watch blood flow through capillaries, all without touching anything. Studies show retention improves by 40% compared to textbooks.

- Retail: IKEA and Sephora have launched holographic product displays. Customers can see a virtual sofa in their living room, or test makeup on a floating 3D face. Retailers report up to 30% more customer engagement and higher conversion rates.

- Defense: Military command centers in Germany and South Korea use shared holographic maps for real-time tactical planning. Multiple officers can point, annotate, and move virtual units as if they’re physical objects on a table.

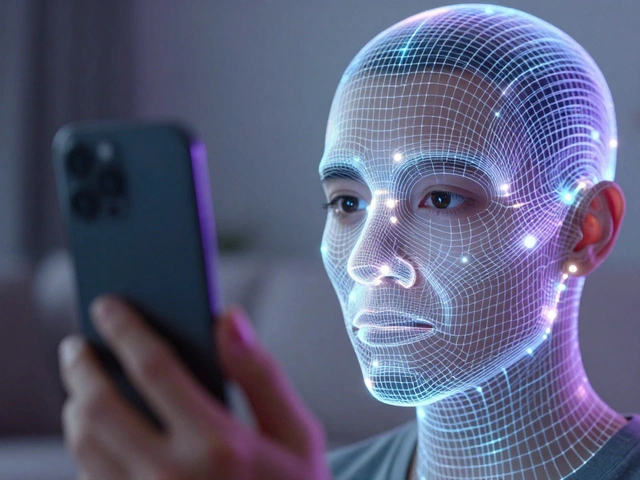

Holographic Smartphones Are Here

You’ve seen AR phones that overlay digital info on your camera view. Holographic smartphones do something different. They project actual 3D images into the air above the screen. No glasses. No headset. Just a floating image you can interact with. In 2026, the first consumer models launched from companies like HoloTech and Lumina. These phones use diffraction-based optics and light field displays to create images with true parallax. Move your head, and the image shifts just like a real object. A holographic video call? You see the person’s face from every angle. A 3D game? You can peek behind a character. Cloud processing helps too: complex rendering happens remotely, then streams to the phone in under 20 milliseconds.

The catch? Battery life is still a challenge. A single holographic display can drain power 3x faster than a regular screen. And right now, there aren’t enough apps to justify the upgrade for most people. But that’s changing fast. Developers are building holographic versions of Zoom, TikTok, and even Google Maps. Imagine navigating your city with a floating 3D street map hovering above your palm.

3D Films and the End of Glasses

Hollywood’s 3D movie craze died because of the glasses. People found them uncomfortable. The effect felt artificial. Holographic cinema is different. New cameras capture full light field data: every ray of light from every direction. Editing software then uses AI to reconstruct scenes with perfect depth and perspective. The result? A film that doesn’t just look 3D; it feels like you’re inside it. Films like The Last Horizon (2025) and Neon Memory (2026) are now being shown in holographic theaters across North America and Europe. No glasses. No screens. Just a floating scene that fills your peripheral vision. Directors are experimenting with new storytelling: scenes where the camera moves around characters in real time, or where the audience can choose their viewpoint. It’s not just cinema anymore; it’s immersive theater, gaming, and art combined.

Why This Matters More Than You Think

We’ve been stuck with flat screens for decades. Phones, TVs, laptops: they all force us to look at a surface. Holography flips that. It brings digital content into the real world. That changes how we learn, work, heal, and connect.

The market is exploding. By the end of 2026, the global holography industry is projected to hit ₹18,400 crore ($2.2 billion USD). That’s not just hardware. It’s software, content creation, sensors, AI engines, and new job categories. Holographic designers. 3D cinematographers. Light field engineers. These weren’t real jobs five years ago.

The biggest shift? We’re moving from viewing digital content to experiencing it. No more scrolling. No more clicking. You reach out. You turn. You walk around. And the digital world moves with you.

What’s Still Holding It Back

It’s not perfect yet. Two big problems remain:

- Hardware complexity: Current holographic displays still need precise lasers, high-speed processors, and ultra-thin optical layers. Making them cheap, durable, and energy-efficient is a huge engineering challenge.

- Content creation: Creating a hologram isn’t like taking a photo. You need depth sensors, specialized software, and training. Tools are improving, but most creators still can’t easily make their own holograms.

What Comes Next

By 2027, expect holographic displays in cars, public transit, and even home mirrors. Imagine checking your reflection and seeing your calendar, weather, or messages floating beside you. Or walking into a store where products come to life with interactive demos. The line between physical and digital is fading. Holography and 3D photography aren’t just new ways to see things. They’re new ways to exist in a digital world. And in 2026, that world is finally starting to look real.

Is holography the same as 3D movies?

No. 3D movies use two slightly different images to trick your brain into seeing depth. You still need glasses, and the image is flat, just with fake depth. Holography recreates the actual light waves from an object, so the image has real depth, parallax, and can be viewed from any angle without glasses.
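
For readers who want to see what recreating the light waves rests on, here is a minimal Python sketch of the recording step: a reference beam and an object beam overlap, and the sensor stores the resulting bright and dark fringes. It is an illustration under simplifying assumptions, not a description of any particular product. Both beams are idealized plane waves of equal strength, the 633 nm wavelength is simply a common red laser line, and the names `recorded_intensity` and `fringe_spacing` are made up for this example.

```python
import cmath
import math

# Assumed wavelength in metres (a common red laser line), for illustration only.
WAVELENGTH = 633e-9


def recorded_intensity(x, angle_deg):
    """Brightness the sensor records at position x (metres).

    The reference beam hits the sensor head-on; the object beam arrives
    tilted by angle_deg. Both are unit-amplitude plane waves.
    """
    k = 2 * math.pi / WAVELENGTH                  # wavenumber
    kx = k * math.sin(math.radians(angle_deg))    # sideways component of the tilted wave
    reference = 1 + 0j
    obj = cmath.exp(1j * kx * x)
    # The sensor only sees intensity, but the phase difference between
    # the two beams decides whether a spot ends up bright or dark.
    return abs(reference + obj) ** 2


def fringe_spacing(angle_deg):
    """Distance between neighbouring bright lines for this tilt."""
    return WAVELENGTH / math.sin(math.radians(angle_deg))


if __name__ == "__main__":
    spacing = fringe_spacing(1.0)
    for step in range(9):
        x = spacing * step / 4
        bar = "#" * round(recorded_intensity(x, 1.0) * 5)
        print(f"{x * 1e6:6.1f} um |{bar}")
```

With a tilt of 1 degree the bright lines sit roughly 36 micrometres apart, which hints at why classic film holography needed such a steady setup. Where those lines fall depends on the phase of the object wave, and that phase is the depth information a flat photo throws away.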

Can I make a hologram at home?

Not with high quality yet. Basic holographic kits using lasers and film exist, but they require a vibration-free lab setup. For most people, the easiest way is to use apps that turn smartphone videos into holograms using AI, like HoloMaker or LightField Studio. These don’t create floating images, but they simulate 3D depth on screen. True holograms still need specialized hardware.

Do holographic smartphones really work without glasses?

Yes. Devices like the HoloPhone X1 and Lumina Holo use light field displays and diffraction optics to project actual 3D images into the air above the screen. You can walk around the image and see it from all sides. No glasses, no headset. It’s still early, and battery life is short, but the technology works.

Why is PEAR-FINCH such a big deal?

Before PEAR-FINCH, once you recorded a hologram, you couldn’t change the focus. If the subject was too close or too far, the image was blurry. PEAR-FINCH lets you capture multiple depths at once, then combine them afterward. This means you can fix focus after recording-something no other system could do. It’s like having a camera that lets you adjust focus after you take the picture, but for 3D light.
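
The camera comparison can be made concrete with classical focus stacking, the photographic technique the analogy points to. The Python sketch below is not the PEAR-FINCH algorithm, which works on holograms rather than finished pictures; it only shows the general idea of keeping the sharpest part of each capture. The function names, the sharpness measure, and the tiny one-dimensional "images" are all assumptions made for illustration.

```python
def local_sharpness(image, i):
    """Absolute second difference at index i: large where the signal changes abruptly."""
    left = image[max(i - 1, 0)]
    right = image[min(i + 1, len(image) - 1)]
    return abs(left - 2 * image[i] + right)


def focus_stack(captures):
    """Merge equal-length captures by keeping, per sample, the locally sharpest one."""
    if not captures:
        raise ValueError("need at least one capture")
    length = len(captures[0])
    if any(len(c) != length for c in captures):
        raise ValueError("captures must have equal length")
    merged = []
    for i in range(length):
        best = max(captures, key=lambda c: local_sharpness(c, i))
        merged.append(best[i])
    return merged


# Hypothetical scene with two bright points, one near and one far.
near_focus = [0, 0, 10, 0, 0, 3, 4, 3, 0]   # left point sharp, right point smeared
far_focus = [0, 3, 4, 3, 0, 0, 10, 0, 0]    # left point smeared, right point sharp

merged = focus_stack([near_focus, far_focus])
print(merged)  # [0, 0, 10, 0, 0, 0, 10, 0, 0]
```

Neither capture shows both points sharply, but the merged result does. Real focus stacking works on two-dimensional images with more robust sharpness measures; the per-sample choice here is kept deliberately simple.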

Are holograms only for high-tech industries?

No. While medicine and defense use them first, consumer applications are growing fast. Retail stores use them for product demos. Schools use them for teaching. Even musicians are performing live holographic concerts. As hardware gets cheaper and software easier to use, holograms will show up in homes, cars, and public spaces.